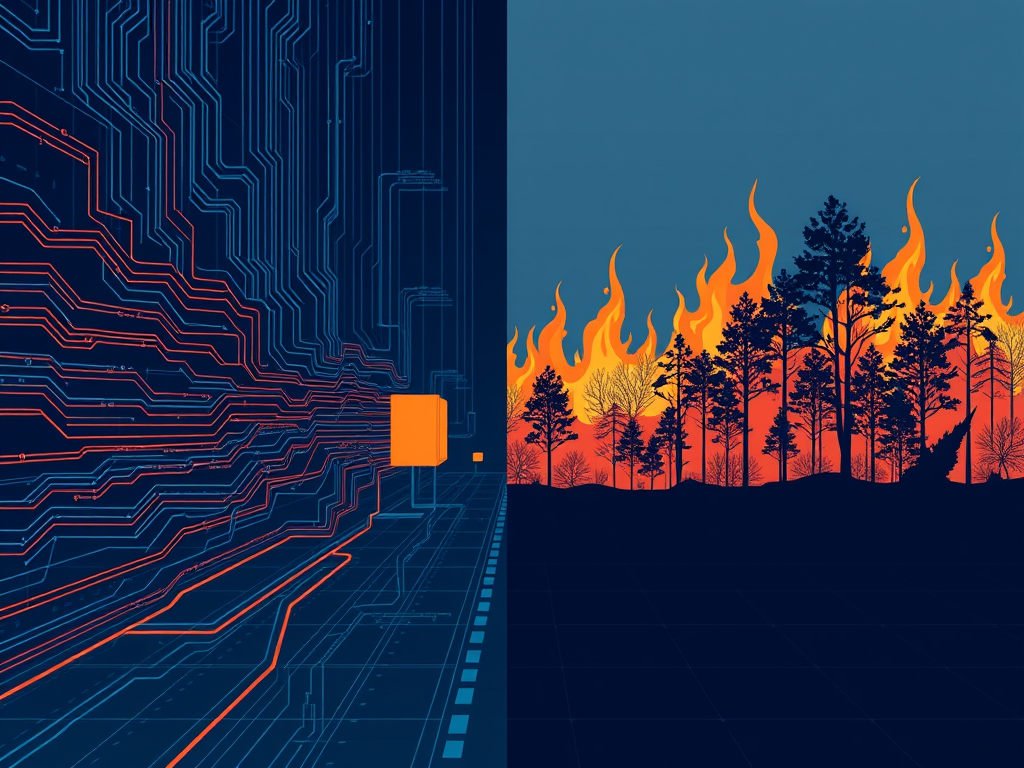

I spent time this week writing about the gravitational pull that drags defense AI companies from defensive applications toward offensive ones.

Yesterday, the Secretary of Defense declared Anthropic a “supply chain risk” because they won’t enable mass surveillance or autonomous kill chains.

Today, I built the engineering solution both sides actually need.

Today’s Portland Claude Code hackathon runs all day. One-day sprint, solo or teams, build anything that meaningfully integrates Claude. I went in solo with a problem I’ve been thinking about since I left defense AI work.

The problem: how do you let the Pentagon use AI for missile defense at machine speed — without requiring a phone call to a CEO — while simultaneously blocking mass domestic surveillance in a way nobody can override?

The answer is the same pattern already proven at scale in production infrastructure.

Policy-as-code enforcement.

The Pattern Already Exists

Kubernetes admission controllers evaluate thousands of API requests per second against pre-negotiated policies. No human intervention. No phone calls. No exceptions for urgent deployments.

The container either passes the security policy or it doesn’t deploy. Period.
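To make the pass-or-fail behavior concrete, here is a minimal sketch of the decision logic inside a validating admission webhook. It assumes the standard `admission.k8s.io/v1` `AdmissionReview` request and response shapes; the rule itself (reject privileged containers) and the function name `review_pod` are illustrative choices, not something from a specific cluster.

```python
from typing import Any


def review_pod(admission_review: dict[str, Any]) -> dict[str, Any]:
    """Build an AdmissionReview response that denies privileged containers."""
    request = admission_review["request"]
    pod_spec = request["object"].get("spec", {})

    # Collect every container that asks for privileged mode.
    offenders = [
        container["name"]
        for container in pod_spec.get("containers", [])
        if (container.get("securityContext") or {}).get("privileged", False)
    ]

    # The response must echo the request uid and carry a boolean verdict.
    response: dict[str, Any] = {"uid": request["uid"], "allowed": not offenders}
    if offenders:
        response["status"] = {
            "code": 403,
            "message": "privileged containers are not allowed: " + ", ".join(offenders),
        }
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }
```

There is no override path in the function: the only way to change the outcome is to change the rule, which is the property the rest of this post relies on.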

OPA (Open Policy Agent) does the same thing for authorization decisions across distributed systems. Rules are code. Evaluation is automatic. Audit trails are complete.

This isn’t theoretical. This is running in production at scale, right now, protecting infrastructure that handles hundreds of millions of requests.

The same pattern works for LLM deployments.

What I Built

Firebreak is a policy enforcement proxy that sits between an LLM consumer and the API endpoint.

Every request gets intercepted. Intent gets classified. Policy gets evaluated. The decision executes automatically.

ALLOW: the prompt passes through. Standard audit logging.

ALLOW_CONSTRAINED: the prompt passes through with enhanced logging and notifications to legal counsel.

BLOCK: the prompt is rejected. The LLM never sees it. Critical alerts fire to trust & safety, the inspector general, or whoever else the policy specifies.
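The intercept, classify, evaluate, execute flow and the three decisions above can be sketched roughly as follows. This is not Firebreak's actual code: the intent names, the keyword classifier, the policy table, and the deny-by-default fallback are all assumptions made for illustration, and a real deployment would presumably use a far more robust classifier.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Decision(Enum):
    ALLOW = "ALLOW"
    ALLOW_CONSTRAINED = "ALLOW_CONSTRAINED"
    BLOCK = "BLOCK"


@dataclass(frozen=True)
class Rule:
    decision: Decision
    notify: tuple = ()  # who gets alerted when this rule fires


# Hypothetical policy table: intent category -> rule.
POLICY = {
    "missile_defense": Rule(Decision.ALLOW),
    "intel_summarization": Rule(Decision.ALLOW),
    "warranted_surveillance": Rule(Decision.ALLOW_CONSTRAINED, ("legal_counsel",)),
    "mass_surveillance": Rule(Decision.BLOCK, ("trust_and_safety", "inspector_general")),
}
# Assumption: anything the classifier cannot place is denied by default.
DEFAULT_RULE = Rule(Decision.BLOCK, ("trust_and_safety",))

# Toy keyword classifier, checked in order; a stand-in for a real intent model.
KEYWORDS = (
    ("warrant", "warranted_surveillance"),
    ("all citizens", "mass_surveillance"),
    ("incoming missile", "missile_defense"),
    ("summarize", "intel_summarization"),
)


def classify_intent(prompt: str) -> str:
    text = prompt.lower()
    for keyword, intent in KEYWORDS:
        if keyword in text:
            return intent
    return "unknown"


def handle(prompt: str, forward: Callable[[str], str], alerts: list) -> tuple:
    """Classify, evaluate policy, notify, then forward or reject."""
    intent = classify_intent(prompt)
    rule = POLICY.get(intent, DEFAULT_RULE)
    for target in rule.notify:
        alerts.append((target, intent, rule.decision.value))
    if rule.decision is Decision.BLOCK:
        response: Optional[str] = None  # the model is never called
    else:
        response = forward(prompt)
    return rule.decision, response
```

The important detail is that `forward` is only reachable on the non-BLOCK branch, so a blocked prompt cannot leak to the model through a logging or retry path.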

Everything is logged to an immutable audit trail.
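One common way to approximate an immutable audit trail in software is a hash chain, where each entry commits to the one before it. The sketch below shows that technique under stated assumptions; the post does not say how Firebreak stores its log, and the record fields and function names here are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited, dropped, or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this is tamper-evident rather than tamper-proof: someone with write access could rebuild the whole chain, so in practice the latest hash would also need to be anchored somewhere neither party controls alone.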

The policies themselves are YAML files. Version-controlled, testable, deployable. Both sides negotiate them once. Neither side can unilaterally change them.
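One way to enforce "neither side can unilaterally change them" is to refuse to load a policy file unless every party has signed its exact contents. The sketch below is an assumption about how that could work, not a description of Firebreak: the YAML schema, party names, and keys are hypothetical, and HMAC with per-party secrets stands in for the asymmetric signatures a real system would more likely use.

```python
import hashlib
import hmac

# Hypothetical policy file contents; the schema is illustrative only.
POLICY_TEXT = """\
rules:
  - intent: missile_defense
    decision: ALLOW
  - intent: mass_surveillance
    decision: BLOCK
"""


def sign(policy_text: str, key: bytes) -> str:
    """Sign the SHA-256 digest of the policy text with one party's key."""
    digest = hashlib.sha256(policy_text.encode("utf-8")).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def is_approved(policy_text: str, signatures: dict, keys: dict) -> bool:
    """True only if every party in `keys` signed exactly this policy text."""
    if not keys:
        return False  # no parties configured means nothing can be approved
    return all(
        hmac.compare_digest(signatures.get(party, ""), sign(policy_text, key))
        for party, key in keys.items()
    )
```

A short usage example: changing a single rule invalidates both signatures, so an edited file is rejected until both sides sign again.

```python
keys = {"government": b"gov-secret", "vendor": b"vendor-secret"}
signatures = {party: sign(POLICY_TEXT, key) for party, key in keys.items()}

print(is_approved(POLICY_TEXT, signatures, keys))
print(is_approved(POLICY_TEXT.replace("BLOCK", "ALLOW"), signatures, keys))
```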

Missile defense? Pre-authorized. Passes through at machine speed. No phone call required.

Mass surveillance? Hard blocked. Doesn’t matter who’s asking or how urgent the situation is. The infrastructure says no.

Why This Matters

I wrote about this pattern in my piece on defense AI drift. The technical infrastructure for monitoring and the infrastructure for targeting are often the same. The sensor fusion architecture that detects threats can identify targets. The data analysis platform that summarizes intelligence can build pattern-of-life profiles.

What changes is the intent — and without infrastructure-level constraints, that intent drifts.

Policy documents don’t hold. I’ve seen it happen. The documents exist, everyone agrees to them, then operational urgency overrides them. The drift happens through a series of scope expansions that each seem reasonable in isolation.

Infrastructure constraints create friction. Friction creates accountability.

The Pentagon’s complaint that they can’t be “beholden to a private company” during a crisis is legitimate. You can’t have national security dependent on whether a CEO answers their phone.

Anthropic’s position that they can’t allow unrestricted use for surveillance and autonomous weapons is also legitimate. Those are real red lines that exist for good reasons.

These positions aren’t incompatible. They just need the right infrastructure layer between them.

The Demo

Seven scenarios run through the system in the demo. Intelligence summarization — allowed, standard audit. Farsi translation — allowed, standard audit. Court-authorized surveillance with a valid warrant — allowed with constraints, legal counsel notified.

Mass domestic surveillance? Blocked. Trust & safety and inspector general alerted.

Autonomous targeting recommendations? Blocked. Three alert targets notified immediately.

The whole thing runs as either an interactive TUI demo or as an OpenAI-compatible proxy server. Point any compatible client at it, and the policy enforcement happens transparently.
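For a sense of what the proxy mode involves, here is a rough sketch of handling one chat-completions request. It assumes the usual OpenAI-style request body (a `messages` list of role/content items) and error envelope (`{"error": {...}}`); the function names, the 403 status, and the `policy_violation` and `blocked_by_policy` values are hypothetical, and the HTTP server and upstream call are left out.

```python
from typing import Any, Callable


def last_user_prompt(body: dict[str, Any]) -> str:
    """Extract the text of the most recent user message."""
    for message in reversed(body.get("messages", [])):
        if message.get("role") != "user":
            continue
        content = message.get("content", "")
        if isinstance(content, str):
            return content
        # Content may also be a list of typed parts; keep only the text parts.
        return " ".join(
            part.get("text", "")
            for part in content
            if isinstance(part, dict) and part.get("type") == "text"
        )
    return ""


def proxy_chat_completion(
    body: dict[str, Any],
    is_blocked: Callable[[str], bool],
    upstream: Callable[[dict[str, Any]], dict[str, Any]],
) -> tuple[int, dict[str, Any]]:
    """Return (HTTP status, JSON body) for one chat-completions request."""
    if is_blocked(last_user_prompt(body)):
        # Reject before any upstream call so the model never sees the prompt.
        return 403, {
            "error": {
                "message": "Request blocked by policy.",
                "type": "policy_violation",
                "code": "blocked_by_policy",
            }
        }
    return 200, upstream(body)
```

Checking only the last user message is a simplification; a stricter proxy would evaluate the whole conversation, since intent can be spread across earlier turns.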

I’ve got it working with Cursor IDE through ngrok. Same enforcement layer, same audit trail, zero changes to the developer experience.

Whether this makes it to the final ten at the hackathon is anyone’s guess.[1] There are maybe 150 participants and that’s a long shot.

But the point isn’t winning a hackathon. The point is proving the pattern works.

The technology itself isn’t hard. The hard part is getting both sides to agree on the policy. Once they do, Firebreak[2] makes sure the agreement holds.

I’ve seen what happens when the line doesn’t hold. I’ve participated in the drift. I’ve made the decisions that seemed reasonable at the time and looked different six months later.

This time, I built the infrastructure I wish had existed then.

The code’s on GitHub. The demo video’s on YouTube. Anyone who’s serious about solving this problem now has a reference implementation to start from.

That’s more than worth it.

- [1] Pencils down is in a half hour. My fingers are crossed!

- [2] … or a solution like it.